DeepSource: Next

Introducing AI Review on DeepSource.

- By Jai Pradeesh, Sanket Saurav

- Company

- Announcement

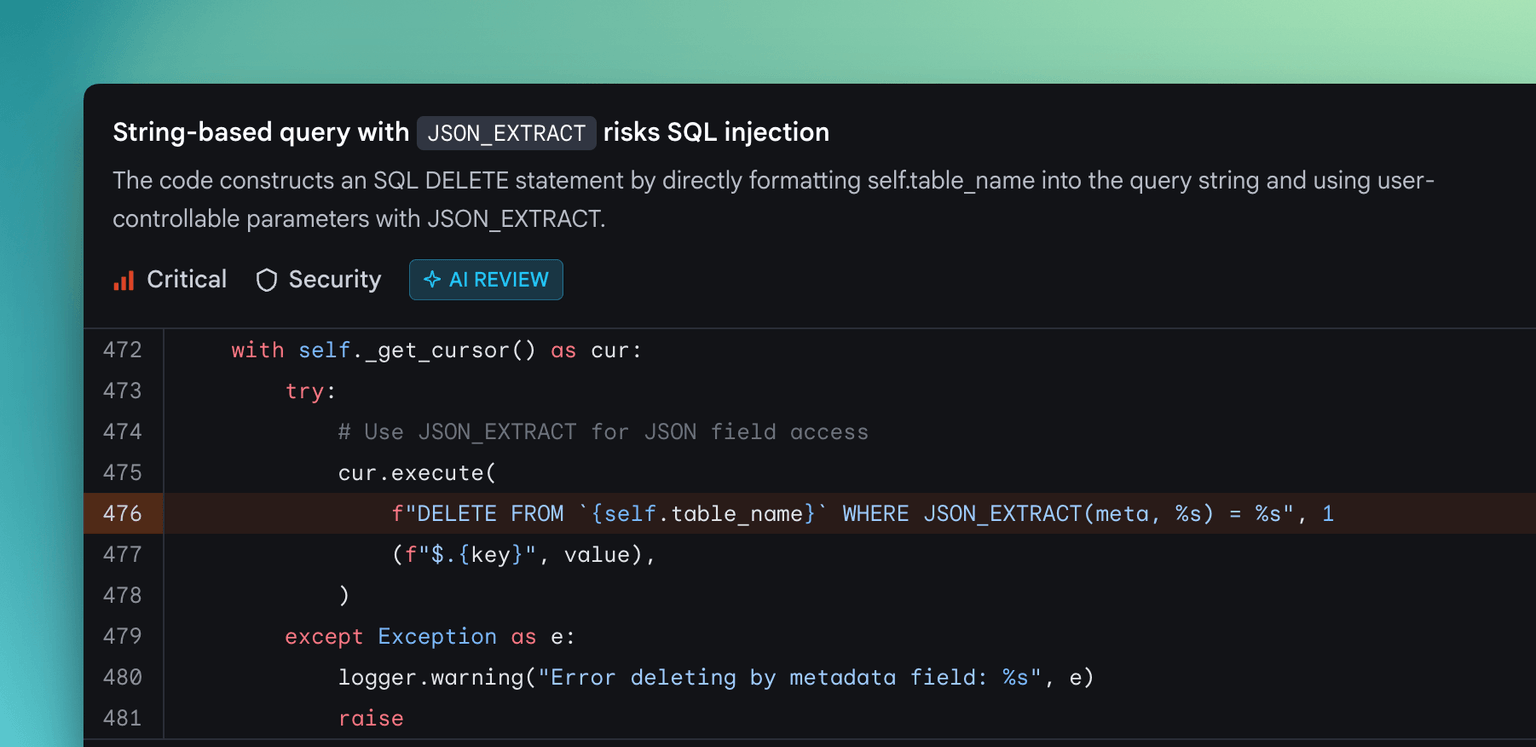

Today we're launching AI Review on DeepSource. This is the biggest change to the platform since we started the company.

We've spent the last several years building static analysis infrastructure used by thousands of engineering teams. Today, we're putting an AI code review agent on top of it. Before the agent starts reasoning about code on its own, it gets structured access to data-flow graphs, taint maps, control-flow analysis, and the findings of 5,000+ static analyzers.

On the OpenSSF CVE Benchmark, this hybrid engine is the most accurate code review tool we've tested — ahead of Claude Code, OpenAI Codex, Devin, Cursor BugBot, Greptile, CodeRabbit, and Semgrep. We've published the raw data, methodology, and every judge verdict publicly.

AI Review is available now on the new Team Plan. Read the changelog for everything that's new.

Why a hybrid engine?

LLM-only code review can reason about code, but it has a blind spot: it doesn't always look at the right things. When we benchmarked LLM-only tools against real CVEs, the most common failure wasn't wrong analysis. It was zero output. The model skipped the vulnerable code entirely.

Static analysis alone has the opposite problem. It checks everything, every time, but it can't reason beyond the patterns it's been programmed to find.

We wanted both. So we built a system where they work together:

- 5,000+ static analyzers run first, catching known vulnerability classes and establishing a high-confidence baseline.

- Our extended static analysis harness builds code intelligence stores: data-flow graphs, control-flow graphs, taint source-and-sink maps, reachability analysis, import graphs, and per-PR ASTs.

- The AI Review agent is seeded with the baseline findings and can query the stores as tools during its review.

When our AI agent reviews your code, it's not reading source files in isolation or relying only on grep-based exploration. It has structured access to how data moves through your application, which paths are reachable, where untrusted input enters, and where it ends up. The static analysis grounds the agent, and the AI extends into territory rules can't cover: business logic flaws, subtle injection vectors, and vulnerabilities that require reasoning across function and file boundaries.
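To make the shape of that pipeline concrete, here is a minimal Python sketch. It is not DeepSource's implementation: `Finding`, `CodeIntelStore`, `run_static_analyzers`, `agent_review`, and the toy data-flow graph are all hypothetical names and data invented for illustration. A single hard-coded rule stands in for the static analyzers, and a plain function stands in for the AI agent. It only shows the ordering described above: baseline findings first, then a store the reviewer can query as a tool.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Finding:
    """A baseline result from a (hypothetical) static analyzer."""
    rule: str
    location: str


@dataclass
class CodeIntelStore:
    """Toy stand-in for a code intelligence store (assumed structure)."""
    # Data-flow edges: the value at each key flows into every listed node.
    data_flow: dict[str, list[str]] = field(default_factory=dict)
    taint_sources: set[str] = field(default_factory=set)
    taint_sinks: set[str] = field(default_factory=set)

    def tainted_paths(self) -> list[list[str]]:
        """Return one shortest path for each sink reachable from a source."""
        paths = []
        for source in sorted(self.taint_sources):
            # Breadth-first search, remembering each node's predecessor.
            parents = {source: None}
            queue = deque([source])
            while queue:
                node = queue.popleft()
                for nxt in self.data_flow.get(node, []):
                    if nxt not in parents:
                        parents[nxt] = node
                        queue.append(nxt)
            for sink in sorted(self.taint_sinks):
                if sink in parents:
                    # Walk predecessors back from the sink to the source.
                    path, cur = [], sink
                    while cur is not None:
                        path.append(cur)
                        cur = parents[cur]
                    paths.append(path[::-1])
        return paths


def run_static_analyzers(store: CodeIntelStore) -> list[Finding]:
    """Stage 1 stand-in: one pattern rule that flags every known sink."""
    return [Finding("dangerous-sink", s) for s in sorted(store.taint_sinks)]


def agent_review(baseline: list[Finding], store: CodeIntelStore) -> list[str]:
    """Stage 3 stand-in: start from the baseline, then query the store."""
    notes = [f"baseline: {f.rule} at {f.location}" for f in baseline]
    for path in store.tainted_paths():
        notes.append("tainted flow: " + " -> ".join(path))
    return notes


# Stage 2 stand-in: a hand-written store instead of one built from real code.
store = CodeIntelStore(
    data_flow={
        "request.args": ["parse_filter"],
        "parse_filter": ["build_query"],
        "build_query": ["db.execute"],
        "config.default": ["build_query"],
    },
    taint_sources={"request.args"},
    taint_sinks={"db.execute"},
)
report = agent_review(run_static_analyzers(store), store)
for line in report:
    print(line)
```

In this toy run the pattern rule alone can only say that `db.execute` is a sensitive sink. The store query adds what the rule cannot: that untrusted input from `request.args` actually reaches it, and through which functions.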

Code review for humans and agents

In a world where AI agents write most of the code, catching bugs in a diff isn't enough. The agent writing the code needs a complete feedback signal to self-correct.

That means security issues, yes. But also code coverage gaps, leaked secrets, dependency vulnerabilities, license risks, baseline regressions. One platform, one feedback loop, not seven different tools stitched together.

DeepSource gives your agents (and the humans overseeing them) that full signal.

That covers AI + static review, code coverage tracking, secrets detection, SCA, license compliance, baseline issue tracking, OWASP/SANS reporting, and flexible PR gates. All of it is accessible on our web app, SCM surfaces like GitHub PRs, our GraphQL API, our CLI, and very soon, our official MCP server.

Get started

The new Team Plan includes everything: unlimited static analysis, code coverage, secrets detection, reporting, integrations, and $120/year in bundled AI Review credits per contributor. Pay-as-you-go after that. No seat minimums. 14-day free trial, no credit card required.

If you're already a DeepSource customer, you can migrate to the new experience from your dashboard.